Main Results

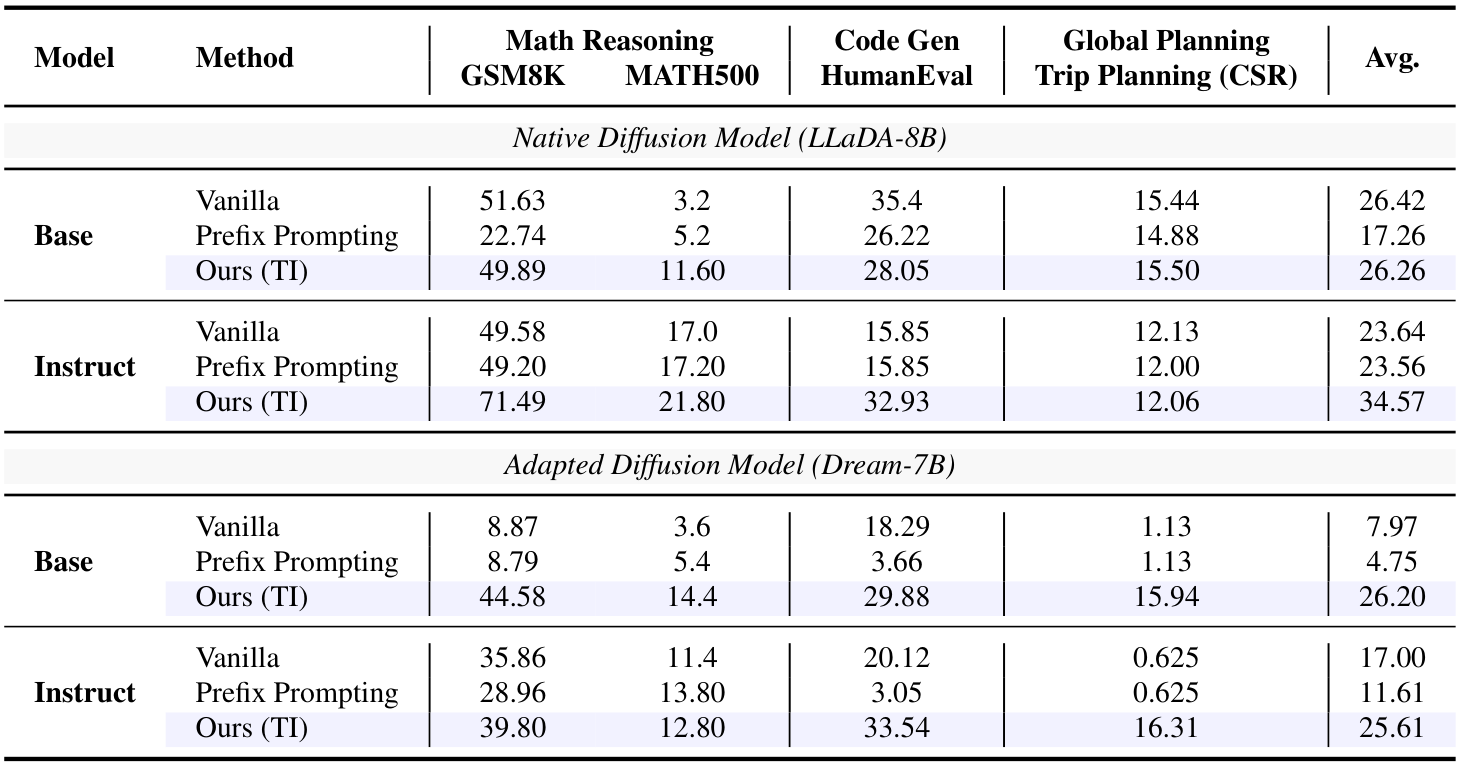

Template Infilling (TI) improves reasoning, coding, and planning performance on both native and adapted diffusion models. The paper reports an average gain of 9.40 percentage points over the baseline, with especially large improvements in the instruction-following and Dream-7B base settings.

The main results compare vanilla decoding, prefix prompting, and Template Infilling across GSM8K, MATH500, HumanEval, and Trip Planning. The table shows that structural conditioning is consistently more effective than standard prefix prompting for both LLaDA-8B and Dream-7B families.
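To make the contrast between prefix prompting and structural conditioning concrete, the sketch below builds the two input layouts a masked diffusion model might receive. It is only an illustration under stated assumptions: the `[MASK]` string, the helper names `prefix_layout` and `template_layout`, the anchor strings, and the span lengths are all hypothetical and are not taken from the paper. It also assumes that Template Infilling keeps fixed anchor tokens inside the response region and leaves the spans between them masked.

```python
from typing import List, Tuple

# Hypothetical mask symbol; real models define their own mask token.
MASK = "[MASK]"


def prefix_layout(prompt: List[str], gen_len: int) -> List[str]:
    """Prefix prompting: all conditioning precedes a fully masked response."""
    return list(prompt) + [MASK] * gen_len


def template_layout(
    prompt: List[str], segments: List[Tuple[List[str], int]]
) -> List[str]:
    """Template-style layout: fixed anchors interleaved with masked spans.

    Each segment is (anchor_tokens, n_masks). The anchor tokens are kept
    as-is and are followed by n_masks positions left for the model to fill.
    """
    seq = list(prompt)
    for anchor, n_masks in segments:
        seq += list(anchor) + [MASK] * n_masks
    return seq


# Illustrative inputs only; the anchors and lengths are made up.
prompt = ["Q:", "What", "is", "12", "*", "7", "?"]
prefix_seq = prefix_layout(prompt, gen_len=8)
template_seq = template_layout(
    prompt,
    [(["Reasoning:"], 5), (["Answer:"], 1)],
)
```

In the prefix layout the response region carries no structure, while in the template layout the anchors sit inside the response and can condition the masked spans on both sides. The sketch stops at building the sequences and does not call any model.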